Introduction

Businesses are constantly looking for new ways to use AI to improve efficiency, accuracy, and competitive advantage. One of the most significant advances in this area is Retrieval-Augmented Generation (RAG). In 2026, with large language models (LLMs) now ubiquitous, the challenge is no longer just generating text. It is generating text that is accurate, contextually relevant, and up to date. This is where RAG systems become indispensable. They address inherent limitations of LLMs, such as hallucination and outdated knowledge, by grounding responses in authoritative, current data. This article explains what RAG systems are, how they work, and why integrating one is crucial for your business in an increasingly AI-driven market.

Understanding Retrieval-Augmented Generation

What is RAG?

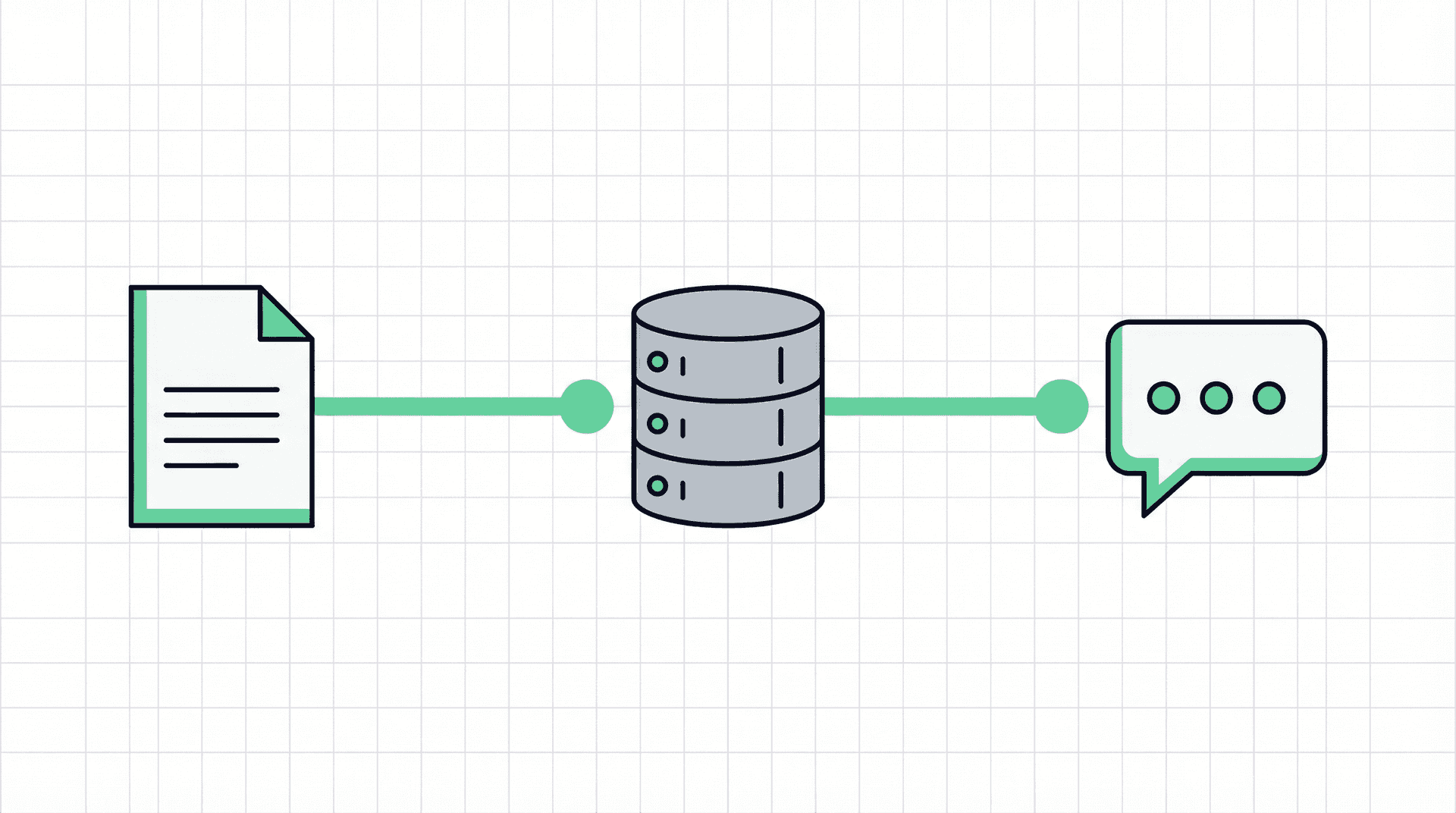

Retrieval-Augmented Generation (RAG) is an AI framework that enhances the capabilities of large language models (LLMs) by integrating them with an information retrieval system. Traditional LLMs generate responses based solely on the data they were trained on, which can lead to several issues: they might generate plausible but incorrect information (hallucinations), provide outdated information, or lack specific domain knowledge. RAG addresses these limitations by enabling the LLM to access and incorporate information from an external, authoritative knowledge base before generating a response [1] [2].

How RAG Works

The RAG process typically involves two main stages:

- Retrieval: When a user poses a query, the RAG system first retrieves relevant documents or passages from a designated knowledge base. This knowledge base can be a collection of internal company documents, databases, or curated external sources. Search and indexing techniques, such as keyword search or vector similarity search over text embeddings, are used to quickly identify the most pertinent information [3].

- Generation: The retrieved information is then provided to the LLM as additional context alongside the original query. The LLM uses this augmented input to generate a more accurate, informed, and contextually relevant response. Grounding the output in verifiable, up-to-date information significantly reduces the risk of hallucinations and improves the overall quality of the generated content [4].
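To make the two stages concrete, here is a minimal Python sketch. It assumes a tiny in-memory knowledge base and uses simple bag-of-words cosine similarity as a stand-in for the embedding-based semantic search a production system would typically use. The function names `retrieve` and `build_prompt` and the sample documents are hypothetical. The actual LLM call is left out because it depends on the model provider.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Stage 1 (retrieval): return up to k documents with nonzero similarity, best first."""
    q_vec = Counter(tokenize(query))
    scored = [(cosine_similarity(q_vec, Counter(tokenize(d))), d) for d in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:k] if score > 0]


def build_prompt(query: str, passages: list[str]) -> str:
    """Stage 2 (generation input): place the retrieved passages ahead of the question."""
    context = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


# Hypothetical knowledge base for illustration only.
knowledge_base = [
    "Refunds are processed within 5 business days of approval.",
    "Our support desk is open Monday to Friday, 9am to 5pm.",
    "Premium plans include a dedicated account manager.",
]

question = "How long do refunds take?"
prompt = build_prompt(question, retrieve(question, knowledge_base))
print(prompt)
# The prompt would then be sent to an LLM; that call is provider-specific and omitted.
```

Replacing the word-overlap scorer with embedding similarity would change only `retrieve`. The prompt-assembly step, which is what grounds the generation, stays the same.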

Key Benefits of RAG for Businesses

Integrating a RAG system offers numerous advantages for businesses across various sectors:

- Enhanced Accuracy and Reliability: By drawing upon authoritative internal data, RAG systems drastically reduce the likelihood of LLMs generating incorrect or misleading information. This is critical for applications requiring high levels of factual accuracy, such as customer support, legal research, or medical inquiries [5].

- Access to Up-to-Date Information: Unlike traditional LLMs, whose knowledge is limited to their last training cut-off date, RAG systems can draw on data that is updated continuously, including real-time sources. Responses therefore stay as current as the underlying knowledge base, which is vital in fast-changing industries or for businesses dealing with dynamic information [6].

- Domain-Specific Expertise: Businesses often possess vast amounts of proprietary data and domain-specific knowledge. RAG allows LLMs to tap into this internal expertise, enabling them to provide highly specialized and relevant answers that would be impossible for a general-purpose LLM to generate [7].

- Reduced Hallucinations: One of the most significant challenges with LLMs is their tendency to hallucinate. RAG significantly mitigates this problem by providing the LLM with concrete evidence from the knowledge base, so that its responses are grounded in facts rather than speculative content.

- Cost-Effectiveness: While fine-tuning an LLM for specific tasks can be expensive and resource-intensive, RAG offers a more cost-effective alternative. It allows businesses to leverage powerful pre-trained LLMs and augment them with their own data without the need for extensive retraining [8].

- Improved User Experience: For applications like chatbots and virtual assistants, RAG leads to more helpful, accurate, and trustworthy interactions, ultimately enhancing the user experience and building greater confidence in AI-powered solutions.
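The up-to-date information and cost-effectiveness benefits both come from the same property. In RAG, new knowledge lives in the retrieval store rather than in the model's weights. The hypothetical `KnowledgeBase` class below is a deliberately simplified sketch that uses in-memory storage and word-overlap matching. It shows that a document added at runtime is retrievable immediately, with no retraining or fine-tuning step.

```python
import re
from dataclasses import dataclass, field
from typing import Optional


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


@dataclass
class KnowledgeBase:
    """Hypothetical in-memory knowledge base; the LLM's weights are never touched."""

    documents: list[str] = field(default_factory=list)

    def add(self, text: str) -> None:
        # Updating knowledge is just storing a new document: no retraining step.
        self.documents.append(text)

    def search(self, query: str) -> Optional[str]:
        """Return the document sharing the most words with the query, or None."""
        query_tokens = tokenize(query)
        best_doc, best_overlap = None, 0
        for doc in self.documents:
            overlap = len(query_tokens & tokenize(doc))
            if overlap > best_overlap:
                best_doc, best_overlap = doc, overlap
        return best_doc


kb = KnowledgeBase()
kb.add("Support hours are 9am to 5pm on weekdays.")
print(kb.search("What is the return policy?"))  # None: nothing relevant yet

# A policy published today becomes searchable as soon as it is added.
kb.add("The return policy allows returns within 30 days.")
print(kb.search("What is the return policy?"))  # the return-policy document
```

Keeping knowledge outside the model in this way means a content update costs one write to the store rather than a training run. That is the basis of RAG's cost advantage over repeated fine-tuning.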

The Grid Theory Angle: Building Superior RAG Systems with Custom Solutions

While the benefits of RAG systems are clear, implementing them effectively requires a nuanced understanding of data architecture, retrieval mechanisms, and integration with existing business processes. This is where Grid Theory excels. We understand that off-the-shelf solutions rarely meet the unique demands of complex business environments, so our approach focuses on building custom systems tailored to your specific needs, ensuring that your RAG implementation is not just functional, but truly transformative.

Our methodology is deeply rooted in the G.R.I.D. framework, which guides our development of robust and scalable AI solutions:

- G - Grounding: We ensure your RAG system is meticulously grounded in your most authoritative and relevant data sources. This involves expert data curation, indexing, and robust retrieval pipelines designed so that the LLM consistently draws on the most accurate information available.

- R - Relevance: Our custom retrieval algorithms are designed to prioritize and fetch information that is highly relevant to the user's query, minimizing noise and maximizing the precision of the LLM's responses. We implement sophisticated semantic search capabilities to understand the intent behind queries, not just keywords.

- I - Integration: We specialize in seamlessly integrating RAG systems with your existing enterprise architecture, including CRM, ERP, and knowledge management platforms. This ensures a unified data flow and a cohesive AI experience across your organization.

- D - Dynamic Adaptation: The business and data landscapes are constantly changing. Our RAG solutions are built with dynamic adaptation in mind, allowing for continuous learning, easy updates to the knowledge base, and flexible scaling to meet evolving demands. We design systems that can evolve with your business, ensuring long-term relevance and performance.
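As a generic illustration of the indexing and relevance ideas above, the sketch below splits a document into overlapping word chunks for indexing. It then keeps only the chunks whose similarity to the query clears a minimum threshold and discards the rest as noise. This is not Grid Theory's pipeline, whose custom algorithms are not described here. The chunk size, overlap, 0.2 threshold, sample policy text, and use of Jaccard word overlap in place of semantic embeddings are all illustrative assumptions.

```python
import re


def chunk_words(text: str, size: int = 8, overlap: int = 2) -> list[str]:
    """Split text into chunks of `size` words; consecutive chunks share `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):  # this chunk reached the end of the text
            break
    return chunks


def jaccard(a: str, b: str) -> float:
    """Jaccard similarity between the lowercase word sets of two strings."""
    set_a = set(re.findall(r"[a-z0-9]+", a.lower()))
    set_b = set(re.findall(r"[a-z0-9]+", b.lower()))
    if not set_a or not set_b:
        return 0.0
    return len(set_a & set_b) / len(set_a | set_b)


def relevant_chunks(query: str, chunks: list[str], threshold: float = 0.2) -> list[str]:
    """Return chunks scoring at or above `threshold`, best first; the rest is treated as noise."""
    scored = sorted(((jaccard(query, c), c) for c in chunks), key=lambda pair: pair[0], reverse=True)
    return [chunk for score, chunk in scored if score >= threshold]


# Hypothetical source document for illustration only.
policy = (
    "Invoices are issued on the first day of each month. "
    "A late payment fee of two percent applies after thirty days. "
    "Contact billing to set up a payment plan."
)
index = chunk_words(policy)
for chunk in relevant_chunks("late payment fee", index):
    print(chunk)
```

The overlap prevents a fact that straddles a chunk boundary from being lost. The threshold trades recall for precision, and raising it sends fewer but more focused passages to the LLM.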

By focusing on these core principles, Grid Theory develops RAG systems that go beyond basic information retrieval. We engineer solutions that provide intelligent, context-aware, and actionable insights, turning your proprietary data into a strategic asset. Our expertise in crafting bespoke AI architectures means your RAG system will be optimized for performance, security, and your unique operational workflows, delivering a superior return on investment.

Ready to Get Started?

Building the right systems doesn't have to be overwhelming. Grid Theory helps businesses design and implement solutions that actually work: no bloated platforms, no guesswork.

Book a discovery call and let's talk about what this could look like for your business.